It seems like everyone in Korea is talking about AlphaGo these days. AlphaGo beat Lee Sedol, a 9-dan professional in the game of Go, with a final score of 4 to 1. Many people see this as an event where artificial intelligence beat a human player. Reactions vary from excitement to bitterness and anxiety. I’m not sure if I should be happy or bitter about the everyday advancement of computers, but I’m an outsider when it comes to the board game Go and I’m not too familiar with artificial intelligence. Perhaps I shouldn’t be writing a post with the title “AlphaGo and the Future of Translation.” However, I decided to give it a go after someone challenged me to try writing about this topic. I was asked to think about the continuous development of artificial intelligence and its effect on translation. Will artificial intelligence eventually replace translators? I don’t know much about Go or artificial intelligence, but I do know a little about translation. Let me give you my thoughts, foolhardy though they may be.

AlphaGo’s triumph is overrated

Before I begin, I’d like to applaud the 9-dan professional, Lee Sedol, for his willpower, concentration, and skill. I heard that AlphaGo is a network of 1,202 CPUs and 176 GPUs with a cost of 10 billion won. As someone who’s pretty bad at even beginner-level computer games, I think it’s amazing and admirable that a person can play Go against AlphaGo. I like strategy games. I taught my son omok, checkers, chess, StarCraft, Go-Stop, Monopoly, etc., even though I’m not very good at those games myself. My son was able to beat me at every game after a few months. He was only in elementary school at the time. Frustrated, I retired early from all kinds of games. Monopoly is a strategy game, but luck is also very important, so I still play it from time to time because I still have a chance of winning. I can only admire Lee Sedol and AlphaGo, and I’m not really in a position to evaluate their historic match.

However, I think AlphaGo’s triumph is overrated. I’m not devaluing the capabilities of artificial intelligence; I wouldn’t even be able to measure the depths of AlphaGo’s capabilities. However, AlphaGo is a computer. What happened is that AlphaGo beat a human at a game that it’s really good at. I can’t swim very well. I may improve if I practice, but that doesn’t mean that I’ll be able to race with dolphins one day. That will never happen. Simply put, swimming is not my game; it is a dolphin’s game. Even if Michael Phelps beat a lazy and distracted dolphin, would it be a big deal for dolphins or humans? If Usain Bolt beat a really lazy and unmotivated cheetah, would that be such a big deal for cheetahs and humans? What’s the point of comparing the abilities of a bird, a fish, and a cheetah? They all have their own game and what they are meant to do very well.

I want to point out that computers and humans are good at different things. Go is a complicated game with a greater number of outcomes and moves than chess. It takes a long time to master Go because it involves probability and calculation. A good Go player needs to be able to quickly calculate many different outcomes and moves and choose the most favorable one. I think computers specialize in these skills. I’m not trying to belittle AlphaGo’s abilities, but computers are usually good at storing data, analyzing it, calculating probability, and considering various cases (almost) at once. All these skills are required to be a good Go player. In my opinion, it’s not such a huge deal that AlphaGo won. Rather, it’s a miracle that a human was able to beat a computer made up of 1,202 CPUs and 176 GPUs, even if it was just once. It’s a spine-chilling and tremendous event.

I don’t think we should overestimate AlphaGo even though it beat Lee Sedol. Computers can be really smart, but also really stupid. AlphaGo has worked hard at inputting data, analyzing it, and calculating it, but during all that time, it didn’t even know that it was playing a game called Go. It also didn’t understand who Lee Sedol was. It didn’t know that its match with Lee Sedol was drawing so much attention in Korea. In short, AlphaGo doesn’t know anything beyond its area of expertise. Computers are amazing, but they are still ‘idiot savants’.

We can’t declare that it’s absolutely impossible for artificial intelligence to conquer translation as it did with Go, but in reality it’s nearly impossible for a few reasons

We can assume that because artificial intelligence played Go well, it will do well in a lot of similar fields. Of course, humans will need to help it along the way. The development and advancement of artificial intelligence promises a bright future for humans. In which fields, though? In the US, artificial intelligence developed by Google is already able to drive cars in the streets. In Ontario, Canada, where I live, laws are being modified to accommodate cars driven by artificial intelligence. It won’t be long until I’ll be able to see these cars being driven in my neighborhood, though it may be a while until prices go down so that they will be accessible to a wider group of people. There are so many examples. I’m interested in medicine. I wonder if there will come a day when artificial intelligence will be able to provide a diagnosis like a doctor can. It’s difficult to become a doctor, and they are well respected because they invested a lot of time studying medicine and accumulating experience. I think diagnosing a patient means performing analysis using logical deduction and statistical probability with the specific data of an individual patient. If this is true, there’s no reason why artificial intelligence won’t be able to do the same. Computers are good at remembering great amounts of data, analyzing it, and choosing the factor with the greatest probability. When ambiguity is involved, a computer will be able to provide a list of options based on probability. I think this is quite possible.

That said, I don’t think we can assume that because computers showed great progress in an area they excel at, they will excel in other areas as well. I highly doubt that artificial intelligence will be able to translate. Not because I think what I do is more difficult than what Lee Sedol does, but because understanding and using language is not an area where computers excel. Some people may think languages aren’t that difficult because most people are able to learn a language (their mother tongue) easily. In reality, however, languages are highly complicated. Languages are multifaceted and multilayered enough to say that understanding a language is like understanding humans. Languages seek accuracy, but they also continuously seek innovation, grow, modify existing words and phrases, and link words and phrases in the wrong places. It’s impossible to regulate and standardize language. Analogies, metaphors, satire, jokes, and sarcasm can only be understood if the listener can empathize with the speaker. There are endless examples, but if you are reading this, I think you know what I mean. I highly doubt that artificial intelligence like AlphaGo will be able to understand this.

Translation involves converting one complicated language into another complicated language. Furthermore, because the historical background and culture behind each language are different, translation is not just another complicated task. Language is a method of thinking and a way for thoughts to expand, and that’s why people who speak different languages think differently. Two people who use different languages (from two different cultures) don’t think differently using the same frame; they use different frames to think differently. The frames for understanding objects or phenomena are different for different languages. Words that refer to specific objects (eye, mother) may correspond. (For simplicity’s sake, I omitted homophones. But even without homophones, the feel, positive and negative connotations, degree of formality, and expansion of the word in different contexts may be distorted or taken away when a word is translated.)

Beyond concrete nouns, abstract nouns are even harder to make correspond perfectly. Because abstraction occurs within a culture using a specific language, there are many abstract concepts that exist in one culture that don’t even exist in another. For example, in the Korean language the term ‘갑질 (gab-jil)’ was formed within a specific context in Korean culture. Thus, it’s very difficult to find a word that contains all its meaning in English for North American readers. Similar expressions can be used to convey the general meaning, but many things are lost in translation. No one expression is correct and the use of an alternative is always possible. Now, let’s take the English verb ‘involve’. The word ‘involve’ is used in everyday conversations in English, but an accurate translation for this common word does not exist in the Korean language. Different Korean words must be used depending on the situation, or phrases (multiple words) must be used to convey the same meaning. An in-depth understanding of the source text is required, along with the ability to re-formulate and re-imagine in the target language. Calculation formulas are useless in this context. And remember, we are still talking only about individual words here; things get even more complex when we move on to sentences.

Machine translation is awkward because the expression of thoughts is different for each language and culture. A literal translation of the greeting, “Good Morning!” into Korean (좋은 아침!) may be accurate, but it’s not natural, nor does it convey the intended message. That’s because this greeting is expressed in different ways in the two languages. Let’s take another example. A literal translation of the English sentence, “Well, I am not exactly the nicest person.” into Korean is “글쎄, 나는 가장 친절한 사람은 아니다.” This translation may be grammatically accurate, but it really does not get the meaning across. In English, the speaker of this sentence is someone who’s made a habit of rude behavior and does not regret it. He’s actually saying, “So what?! Sue me!” The literal Korean translation does not express this emotion. Lastly, in English, saying, “Never mind” can simply be a harmless “just leave it” depending on the context, but when said with a sigh, it can mean “just leave it, you idiot,” said by someone who’s expressing contempt for another person’s incompetence or lack of interest.

Language is very much rooted in context, social customs, and culture, and cannot be output by calculating the probability or number of cases. Unexpected new expressions can also come into being in a very short time because everyone wants to fit in and imitate one another in a society. Even great expressions can become stale if they are used for too long or too often. Languages evolve due to various events, changes, and trends in a society. I’m sure the difference between translation and a game of Go is quite clear by now. For the reasons mentioned above, translation is extremely difficult even for humans.

Let’s change the topic a little bit and talk about machine translation. It’s easy to mock machine translation and say how ridiculous it is. (But it’s difficult to give up machine translation. Machine translation will probably become a more integral part of our lives.) You mustn’t think that machine translation and artificial intelligence translation are the same thing, nor that current machine translation will develop and become artificial intelligence translation. To understand this better, we need to understand the current principles of machine translation. Many people still think that machine translation involves an enormous computer owned by a large company like Google that analyzes the input language, makes some sort of calculation, and then substitutes the source language with the target language. Several decades ago, such a model was used to develop machine translation, but most people have given up on that method. The output of current machine translation does not result from logical reasoning based on the rules of grammar, but from statistical choice based on a vast amount of data. (Some parts are hybrid, however.) Why is this the case? Why would anyone invest billions of dollars over more than thirty years only to give up? It’s because language is not as simple as it seems, as I’ve mentioned above. In my opinion, it’s wiser to rely on statistical selection based on vast amounts of data. I think machine translation will develop in this direction in the future. But you know what? Machine translation will not be able to catch up to human translation even in a hundred or a thousand years. It has great potential, but it will not be better than human translation. This is because machine translation is still going to be a statistical choice. Society, culture, and language are always changing. Therefore, machine translation will always be catching up to humans, and humans will always be teaching it. There will never be a “perfect machine translation” whether or not artificial intelligence is involved.
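For readers who like to see things concretely, here is a minimal sketch of what “statistical choice based on a vast amount of data” could look like. It is only a toy illustration, not how Google or any real system works: the tiny “corpus”, the function names `build_phrase_table` and `translate`, and the Korean renderings are all made up for this example. The idea is simply that the machine counts how human translators rendered a phrase in the past and picks the most frequent choice.

```python
from collections import Counter

# Hypothetical toy "parallel corpus": each pair is an English phrase and the
# Korean rendering a human translator chose for it (invented for illustration).
observed_pairs = [
    ("good morning", "안녕하세요"),
    ("good morning", "안녕하세요"),
    ("good morning", "좋은 아침"),
    ("never mind", "신경 쓰지 마세요"),
    ("never mind", "됐어요"),
    ("never mind", "신경 쓰지 마세요"),
]


def build_phrase_table(pairs):
    """Count how often each source phrase was rendered as each target phrase."""
    table = {}
    for source, target in pairs:
        table.setdefault(source, Counter())[target] += 1
    return table


def translate(phrase, table):
    """Return the most frequent past rendering and its relative frequency.

    Returns None for a phrase that never appeared in the data: the machine
    has nothing to choose from until humans supply new examples.
    """
    candidates = table.get(phrase)
    if not candidates:
        return None
    best, count = candidates.most_common(1)[0]
    return best, count / sum(candidates.values())


phrase_table = build_phrase_table(observed_pairs)
print(translate("good morning", phrase_table))
print(translate("gab-jil", phrase_table))
```

Notice that nothing in this sketch understands a sigh, a tone of contempt, or a brand-new expression. It can only repeat what people have already written, which is why I say humans will always be teaching it.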

The future does not belong to those who worry but those who enjoy life and prepare

I spent a lot of time thinking about how I should conclude this post. I wondered if I’d written too much about a topic I’m not so knowledgeable about… but I’ll summarize my thoughts here briefly.

1) In my opinion, there’s still a long way to go before computers can master human language or translation (at least for now). I’m not belittling the IQ levels of artificial intelligence, but language and translation are just not areas where artificial intelligence can excel. Who’s to say what will happen in the distant future? And how much should predictions about the distant future affect current translators and those thinking about becoming translators now? Let’s say that in fifty years (this is an exaggerated estimate), artificial intelligence is able to translate at a significant level, leading to a rapid decrease in translation prices. Will you change your job to a safer one because of such a prediction? Which job will you switch to, then? If there does come a day when artificial intelligence is able to translate as well as humans can, what won’t it be able to do?

2) Even if it is technically possible for artificial intelligence to learn language the way humans do, it would be very difficult for this to actually happen. The technology for electric cars had already been developed by the 1970s, but electric cars didn’t really reach the mass market until around 2010. Humans first landed on the moon in 1969, but no one has gone back since the early 1970s. Why? For something to materialize, a lot of factors other than technology are required, and there are a lot of obstacles. There needs to be political and economic support, and this support needs to be greater than the obstacles. Having said this, I’m not sure there’s enough motivation to develop artificial intelligence that can understand language at the human level. The human race has yet to solve the problems of famine, terrorism, war, drought, climate change, and diseases such as cancer and AIDS. Will anyone be willing to invest an enormous amount of resources in order to develop artificial intelligence that can learn languages? (Even if artificial intelligence masters one language at the human level, it’s another thing to master two different languages.)

3) Change in technology is likely to bring great changes in all areas of society, not just translation. There’s no need to look at this negatively. Think about the changes in the medical field we discussed earlier. Would it be so bad if artificial intelligence could diagnose patients? Will doctors face mass unemployment? I’m not entirely sure, but I don’t think we need to worry that much. First of all, it would be a net positive for everyone if artificial intelligence were able to provide more accurate diagnoses than human doctors (in general). It would mean that more people could receive quicker and cheaper medical care. And we don’t know for sure if the number of doctors would increase or decrease if such a day were ever to come. The role of doctors would change, in the same way that the role of doctors changed with the development of the MRI or the X-ray. Doctors will have to evaluate, monitor, control, and manage artificial intelligence as well as explain diagnoses made by artificial intelligence to patients and provide advice. I think it’s absurd to think that there will no longer be doctors. With easy, quick, and cheap diagnosis by artificial intelligence, there will be fewer quack doctors who habitually misdiagnose, but the role of doctors will still be important and they will take on many other roles that they are not performing now. Education in medicine will have to prepare for such a future.

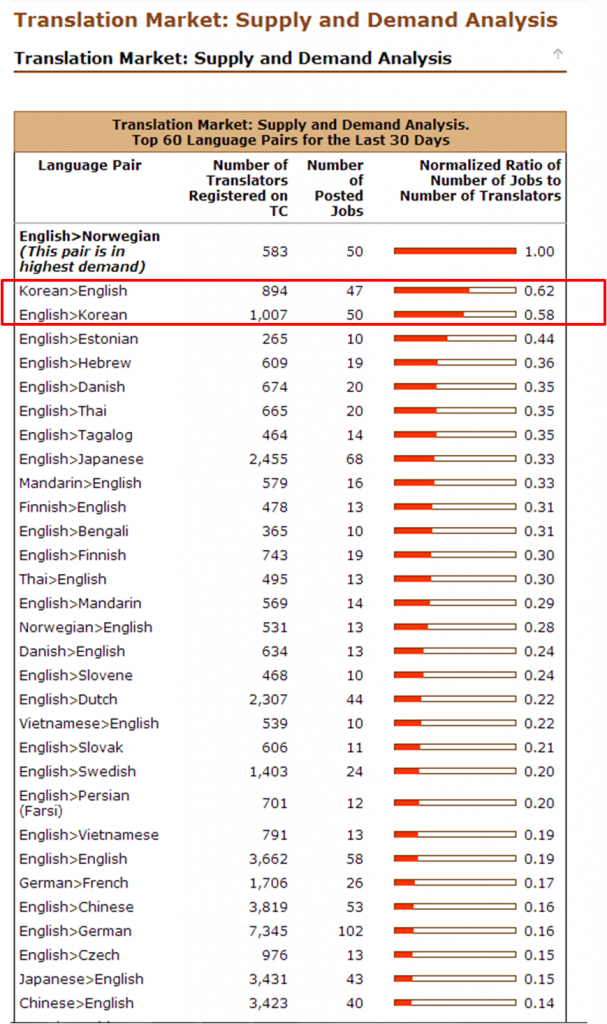

The same could probably be said for the translation industry. Has the quantity of translations decreased and have many translators become unemployed since the development of machine translation? Has the price of translation decreased because people can buy adequate translation services easily and cheaply? No way. Perhaps “draft translators” who can only produce machine translation level work have no more place in the market (but actually there was no place in the market for these translators even before machine translation). But the demand for high quality translation and interpretation has only exploded as more people understand foreign languages better and communication between languages increases. My guess is that this is the best it’s ever been for translators, and I think this trend will continue in the future. I’ve seen translators despise machine translation, but never anyone who’s feared it. And as I’ve mentioned previously, machine translation will always trail behind humans but never catch up. With that said, machine translation is not a threat to translators, but a friend as it is quite useful if used properly. I hope the quality of machine translation increases quickly, so that I can use it more than I do now.

I want to tell translators and those aspiring to become translators not to worry. We are not competing with artificial intelligence but with ourselves. Instead of worrying about that, worry about how you can understand other people, cultures, and ways of thinking better. I used the term ‘worry’, but it’s not really a worry. Curiosity is part of our human nature. For those of us who enjoy stretching and exercising our minds as well as researching and setting out on adventures, it’s not worrying at all. (My method of ‘worrying’ is watching movies, reading comics, chatting, looking things up in the dictionary, reading books and magazines, asking people questions, translating (of course), etc.) People request translation and pay us money because we are fascinating and strange (especially to artificial intelligence) people who spend our time ‘worrying’ every day.